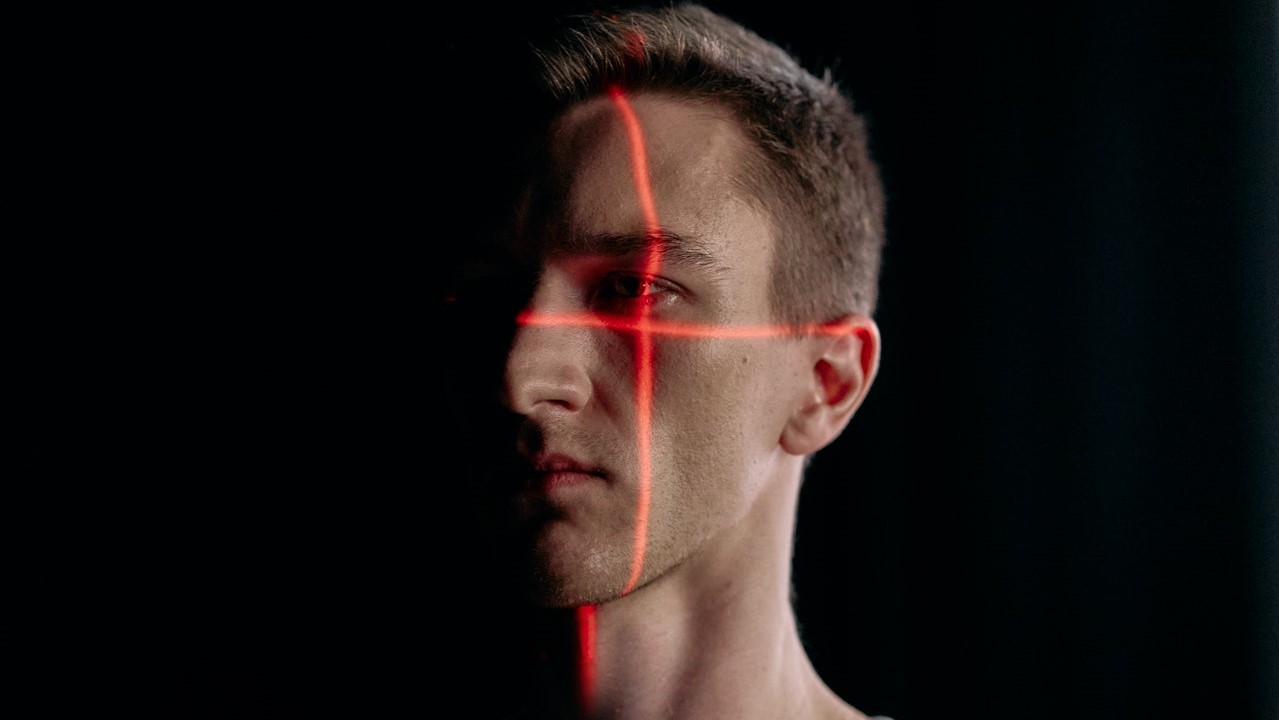

Facial Recognition – Its Use, The Myths and The Legitimate Concerns

How Extensive is the Use of Facial Recognition – and How Secure is it?

The Guardian has recently reported on the growing use of live facial recognition by police forces across England and Wales. The government has described the technology as one of the most significant developments in crime detection since DNA matching, but it has also raised serious questions about privacy, bias and the accuracy of evidence used in criminal cases.

From a criminal defence perspective, facial recognition must be treated with caution. While it may assist police in locating suspects, it should not be viewed as infallible. Like any form of evidence, it must be properly tested, lawfully obtained and fairly used.

How facial recognition works

Facial recognition technology compares an image of a person’s face against images held in a database. It analyses facial features such as the distance between the eyes, the shape of the face and other identifiable landmarks.

There are two main forms. Retrospective facial recognition is used after an incident, comparing images from CCTV, phones, doorbell cameras or social media against police databases. Live facial recognition scans people in real time, often in busy public spaces, and compares faces against a police watchlist.

If the system identifies a possible match, officers nearby may be alerted and asked to decide whether to stop or arrest the individual.

The myth of certainty

One of the biggest risks with facial recognition is the assumption that a match is conclusive. It is not. A facial recognition alert is not the same as proof of guilt.

The technology produces possible matches based on probability. Human officers still have to assess whether the image is accurate and whether further action is justified. Mistaken identification remains a real concern, particularly where images are unclear, lighting is poor, or the person’s appearance has changed.

In criminal defence cases, it is therefore vital to examine exactly how the match was produced and whether it was reliable.

Privacy and public surveillance

Live facial recognition involves scanning large numbers of people who are not suspected of any offence. The Guardian reported that millions of faces have already been scanned by police deployments, often in town centres and at public events.

Police say that images of people who do not match a watchlist are deleted. However, the scale of scanning still raises important questions about privacy, necessity and proportionality.

From a defence perspective, there may be arguments about whether the deployment itself was lawful, whether the watchlist was properly compiled, and whether the use of the technology was justified in the circumstances.

Bias and accuracy concerns

Facial recognition has historically raised concerns about racial and gender bias. Earlier systems were criticised for being less accurate when identifying women and people from minority ethnic backgrounds.

Although the technology may have improved, concerns remain. Accuracy can depend on the software used, the quality of the images, the make-up of the watchlist and where deployments take place.

If a person is stopped or arrested following a facial recognition alert, the defence may need to scrutinise whether bias or error played any role in the identification.

Key defence considerations

Where facial recognition evidence forms part of a prosecution case, defence solicitors may need to consider:

- whether the technology was lawfully deployed;

- whether the watchlist was accurate and properly authorised;

- whether the image quality was sufficient;

- whether the match was independently verified by officers;

- whether the defence has received full disclosure about the system used.

Disclosure is particularly important. The defence may require information about the software, confidence thresholds, false match rates, officer decision-making and any body-worn footage from the stop or arrest.

Facial recognition and arrest

A facial recognition alert should not automatically lead to arrest. Police must still have lawful grounds and must satisfy the normal arrest criteria, including necessity.

In some cases, the defence may argue that an arrest was based too heavily on a technology alert rather than proper human assessment. If the stop, search or arrest was unlawful, this may affect the admissibility of evidence obtained afterwards.

Due process in a developing area

The legal framework around live facial recognition is still developing. Oversight is currently spread across several bodies, and the government is consulting on a new legal framework.

Until clearer rules are in place, courts will play an important role in deciding whether police use of this technology is lawful, proportionate and compatible with fundamental rights.

Conclusion

Facial recognition may become an increasingly common part of policing in England and Wales. Used properly, it may assist investigations. Used carelessly, it risks mistaken identification, unfair arrests and privacy breaches.

For those accused of offences following a facial recognition match, the key issue is simple: the technology must be tested, not trusted blindly.

Criminal defence solicitors have a vital role in ensuring that facial recognition evidence is scrutinised carefully and that due process is followed at every stage.

How We Can Help

If you have any questions about the use of facial recognition technology, or if you need legal representation in court, don’t hesitate to call us now on 0161 477 1121 or email us.